Artificial Intelligence (AI) is becoming an integral part of daily life, including everyday calculations. But how well do these systems actually handle basic math? And how much should users trust them?

A recent study advises caution. The Omni Research on Calculation in AI (ORCA) shows that when you ask an AI chatbot to perform everyday math, there is roughly a 40 per cent chance it will get the answer wrong. Accuracy varies significantly across AI companies and across different types of mathematical tasks.

So which AI tools are more accurate, and how do they perform across different types of calculations, such as statistics, finance, or physics?

The results are based on performance across 500 prompts drawn from real-world, calculable problems. Each AI model was tested using the same set of 500 questions. The five AI models were tested in October 2025.

The chosen models are:

- ChatGPT-5 (OpenAI)

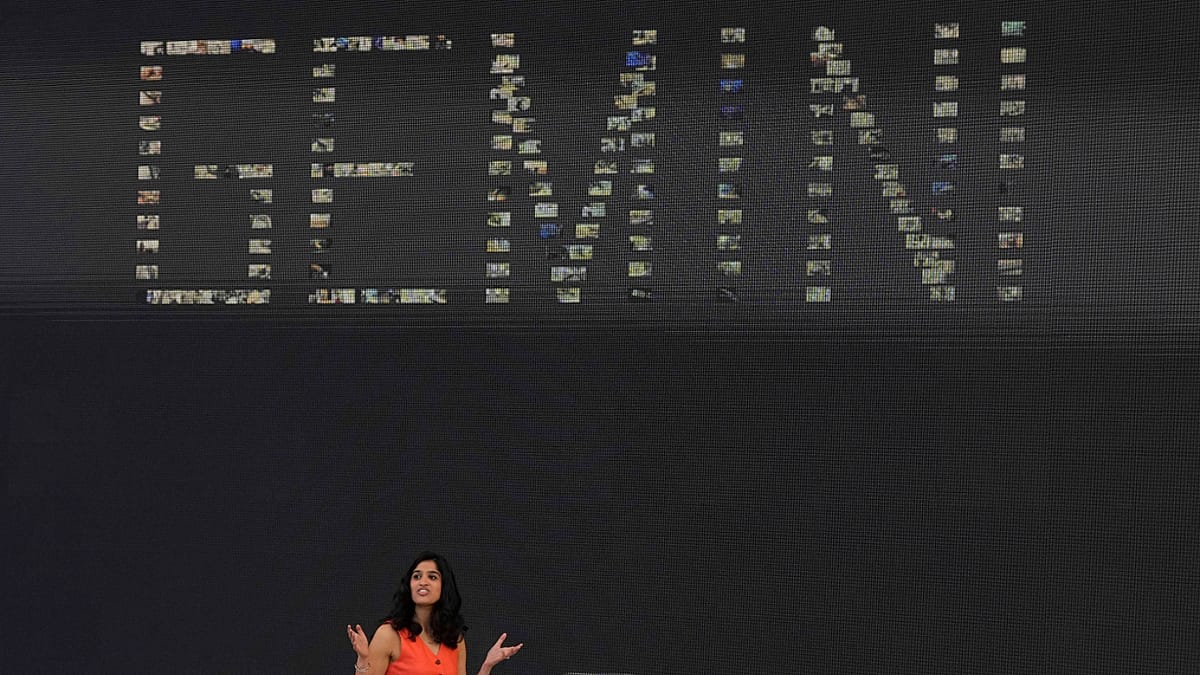

- Gemini 2.5 Flash (Google)

- Claude 4.5 Sonnet (Anthropic)

- DeepSeek V3.2 (DeepSeek AI)

- Grok-4 (xAI).

The ORCA Benchmark found that no AI model scored above 63 per cent in everyday math. Even the leader, Gemini (63 per cent), gets nearly 4 out of 10 problems wrong. Grok scores almost the same at 62.8 per cent. DeepSeek ranks third at 52 per cent, ChatGPT follows with 49.4 per cent, and Claude comes last at 45.2 per cent.

The simple average across the five models is 54.5 per cent. These scores reflect the models’ overall performance across all 500 prompts.
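The 54.5 per cent figure can be reproduced from the five overall scores quoted above. The snippet below is a minimal sketch of that arithmetic; the dictionary and function names are illustrative and do not come from the ORCA report.

```python
# Overall accuracy scores (per cent) as quoted in the article.
overall_scores = {
    "Gemini": 63.0,
    "Grok": 62.8,
    "DeepSeek": 52.0,
    "ChatGPT": 49.4,
    "Claude": 45.2,
}


def simple_average(values):
    """Unweighted mean: every model counts equally."""
    values = list(values)
    return sum(values) / len(values)


average = simple_average(overall_scores.values())
print(f"Simple average: {average:.1f} per cent")
```

Rounded to one decimal place, the result matches the average reported in the article.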

“Although the exact rankings might shift if we repeated the benchmark today, the broader conclusion would likely remain the same: numerical reliability remains a weak spot across current AI models,” Dawid Siuda, co-author of the ORCA Benchmark, told Euronews Next.

Highest accuracy at math & conversions, lowest at physics

The models’ performance varies by category. In math and conversions (147 of the 500 prompts), Gemini leads with 83 per cent, followed by Grok at 76.9 per cent and DeepSeek at 74.1 per cent. ChatGPT scores 66.7 per cent in this category.

The simple average accuracy across all five models is 72.1 per cent, the highest among the seven categories.

By contrast, physics (128 prompts) is the weakest category, with an average accuracy of just 35.8 per cent. Grok performs best at 43.8 per cent, slightly ahead of Gemini at 43 per cent, while Claude drops to 26.6 per cent.

Across the seven categories, Gemini and Grok each rank first in three, and they share the top spot in one.

DeepSeek’s accuracy is just 11 per cent in biology and chemistry

DeepSeek recorded the lowest accuracy of any model in any category: 10.6 per cent in biology and chemistry. This means the model failed to provide a correct answer to roughly nine out of ten questions.

The largest performance gaps appear in finance and economics. Grok and Gemini both reach 76.7 per cent accuracy, while the other three models (ChatGPT, Claude, and DeepSeek) fall below 50 per cent.

Warning to users: Always double-check with a calculator

“If the task is critical, use calculators or proven sources, or at least double-check with another AI,” Siuda said.

Four mistakes that AI models make

The experts grouped the mistakes into four categories. The challenge lies in ‘translating’ a real-world situation into the right formula, according to the report.

1. “Sloppy math” errors (68 per cent of all mistakes). In these cases, the AI understands the question and the formula but fails in the actual computation. This category includes ‘precision and rounding issues’ (35 per cent) and ‘calculation errors’ (33 per cent).

For example, one prompt asked: “For a lottery where 6 balls are drawn from a pool of 76, what are my chances of matching 5 of them?” The correct answer is ‘1 in 520521’. ChatGPT-5 answered ‘1 in 401397’.

2. “Faulty logic” errors (26 per cent of all mistakes). These are more serious because they show the AI is struggling to understand the underlying logic of the problem. They include ‘method or formula errors’ (14 per cent), such as using a completely incorrect mathematical approach, and ‘wrong assumptions’ (12 per cent).

3. “Misreading the instructions” errors (5 per cent of all mistakes). These occur when the AI fails to correctly interpret what the question is asking. Examples include ‘wrong parameter errors’ and ‘incomplete answers’.

4. “Giving up” errors. In some cases, the AI simply refuses or deflects the question rather than attempting an answer.
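The lottery example cited under “sloppy math” can be checked by hand or with a few lines of code. The sketch below assumes the player picks 6 numbers and that “matching 5” means matching exactly 5 of the 6 drawn balls; under that reading, it reproduces the ‘1 in 520521’ answer quoted above. The function name is illustrative and is not taken from the report.

```python
from math import comb


def exact_match_counts(pool, drawn, matched):
    """Count outcomes for matching exactly `matched` of the `drawn` balls.

    Assumes the player also picks `drawn` numbers from the same pool.
    Returns (favourable tickets, all possible tickets).
    """
    total = comb(pool, drawn)
    # Choose which drawn balls are matched, then fill the remaining
    # picks from the balls that were not drawn.
    favourable = comb(drawn, matched) * comb(pool - drawn, drawn - matched)
    return favourable, total


favourable, total = exact_match_counts(pool=76, drawn=6, matched=5)
one_in = round(total / favourable)
print(f"1 in {one_in}")
```

There are 218,618,940 possible tickets and 420 of them match exactly five balls, which works out to about 1 in 520,521.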

“Their weak spot is rounding – if the calculation is multi-step and requires rounding at some point, the end result is usually far off,” Siuda said.
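A hypothetical savings calculation, not taken from the benchmark, shows how rounding in the middle of a multi-step problem can throw off the final result. The sketch below assumes a 5 per cent annual rate converted to a monthly rate and compounded for 30 years; every figure and name in it is illustrative.

```python
# Hypothetical inputs, chosen only to illustrate the effect.
principal = 1000.0
annual_rate = 0.05
months = 30 * 12

# Step 1: convert the annual rate to a monthly rate.
monthly_rate = annual_rate / 12  # about 0.0041667

# Rounding the intermediate value too early drops most of its precision.
monthly_rate_rounded_early = round(monthly_rate, 3)  # 0.004

# Step 2: compound monthly, then round only the final amount.
balance_late_rounding = round(principal * (1 + monthly_rate) ** months, 2)
balance_early_rounding = round(
    principal * (1 + monthly_rate_rounded_early) ** months, 2
)

gap = balance_late_rounding - balance_early_rounding
print(f"Rounded only at the end: {balance_late_rounding}")
print(f"Rounded mid-calculation: {balance_early_rounding}")
print(f"Gap: {gap:.2f}")
```

The early rounding changes the monthly rate by less than 0.0002, but after 360 compounding steps the two balances differ by a few hundred on a principal of 1,000.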

The research used the most advanced models available to the general public for free. Every prompt had exactly one correct answer.